Thank You for Doom Scrolling

Inside the trial that could cost Silicon Valley billions

In 2006, Hollywood released a movie about a man whose job was to make cigarettes sound safe.

Nick Naylor, the fictional tobacco lobbyist at the center of “Thank You for Smoking,” had a talent for one specific argument. Cigarettes don’t kill people. People choose to smoke. Personal responsibility. The product is fine. It’s the choices that are the problem.

Twenty years later, Mark Zuckerberg sat in a Los Angeles courtroom in February 2026 and made the same argument. Instagram doesn’t harm teenagers. Teenagers harm themselves by how they use it. The platform is fine. It’s the behavior that’s the problem.

He had eight hours on the stand. Eight hours defending a product that the jury in that same courtroom is being asked to find defective, the same way a car with bad brakes is defective. Not the posts. The platform itself.

The platform’s core defect?

The notification loop.

The algorithm that figures out, within days, what makes a 13-year-old girl feel ugly, and then feeds her more of it.

The lawsuit targets the product itself. Whether Instagram was built to be addictive, and whether the company knew.

It’s the first time the CEO of a major tech company has had to defend how his product was designed, under oath, in front of a jury. The product is finally on trial.

The defense Zuckerberg is using has been tried before. By an industry that used it for fifty years before the courts caught up.

The Playbook

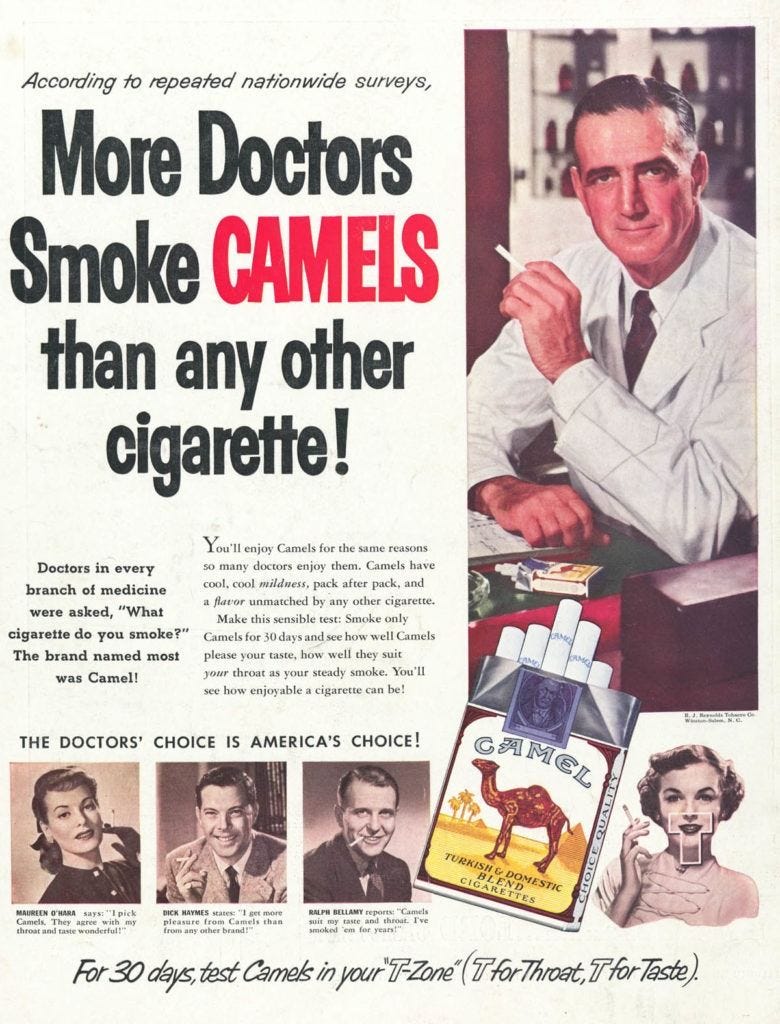

Big Tobacco knew cigarettes caused cancer by the 1950s. Internal research proved it. The companies buried the findings and hired scientists to cast doubt. For decades, their public position was simple: no link between smoking and disease had been established. Meanwhile, their chemists were busy engineering cigarettes to deliver nicotine faster and more efficiently.

They went after teenagers. First with Joe Camel, a cartoon mascot, then with candy-flavored cigarettes. The pitch was always dressed up as freedom and cool, never addiction. When the lawsuits came, their lawyers made a clean argument: nobody forced anyone to light up.

Their defense almost worked.

Meta is hoping that same defense can work this time around.

In 2019, Meta’s own researchers concluded the company made body image issues worse for one in three teen girls. That stayed internal.

A 2020 internal document found that 11-year-olds were four times as likely as older users to keep coming back to Meta’s apps. The company flagged this as an opportunity, not a warning.

An internal strategy document put it plainly: “If we want to win big with teens, we must bring them in as tweens.” Internal targets were set to push daily engagement for 10-year-olds to 40 minutes by 2023.

They knew. They planned around it. And at least one employee saw the parallel coming. An internal message, later entered as evidence, asked: “If the results are bad and we don’t publish and they leak, is it going to look like tobacco companies doing research and knowing cigs were bad and then keeping that info to themselves?”

Yes. That’s exactly what it looks like.

Frances Haugen left Facebook, now Meta, in 2021 and took tens of thousands of pages of internal documents with her. Those documents showed the gap between what the company said publicly and what it knew privately.

In September 2025, four whistleblowers, including Dr. Jason Sattizahn and Cayce Savage, took their allegations against Meta to a Senate Judiciary subcommittee. They told senators that Meta’s legal department altered teen safety research. Then, they said, the company hid the altered findings behind attorney-client privilege, a legal shield designed to keep lawyer communications out of court.

They didn’t just ignore the research. They worked to make it disappear.

The tobacco industry’s own cancer research became the smoking gun in court. It showed the gap between what they knew and what they said. Meta’s teen mental health studies are sitting in the same position now.

The Shield

So why did this take so long?

In 1996, Congress passed Section 230 of the Communications Decency Act. Twenty-six words that became the most important sentence in internet law: “No provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.”

Plain English: don’t sue the bookstore for what’s in the books. Don’t blame the phone company for what callers say. The platform isn’t the author.

It was a sensible rule for 1996. The internet was message boards and AOL. Platforms hosted speech but didn’t control it. They passed things along. They didn’t choose what you saw.

That’s not what Instagram is.

Instagram’s algorithm watches you.

It learns from you.

Every pause, every click, it adjusts.

If you spend two seconds longer on weight-loss content, it remembers. It serves you more. Then more. Internal Facebook research showed the algorithm had identified vulnerable teenagers and was serving them eating disorder content at three times the rate of other users. Among those vulnerable teens, content related to eating disorders made up 10.5% of what they saw. For everyone else, 3.3%.

The platform didn’t write that content. But it decided who got it. It built the machine that sorted teens by vulnerability and filled their screens accordingly.

Section 230 says platforms aren’t publishers. Technically, that’s still true. But calling Instagram a neutral platform in 2026 is like calling a casino a building where people happen to gamble. The design itself is the product.

That gap, between what Congress wrote Section 230 to protect and what it actually shields today, is where these lawsuits pushed through. In September 2023, Judge Carolyn Kuhl in Los Angeles cracked the shield. She ruled that Section 230 did not protect the companies from claims about how their apps were designed. The people suing Meta weren’t attacking the content users posted. They were attacking the product’s design. The system that decides what you see next. The algorithm that picks and pushes. That’s a different argument, and it got a different answer.

The Broken Product

Section 230 protects platforms from being treated as the publisher of their users’ posts. So the plaintiffs stopped arguing about the posts.

They flipped the argument. They set out to prove the product itself is broken. Not what users post. Not what teenagers do with it. The problem, they argue, is the machine that decides what teenagers see next.

The plaintiff goes by “Kaley” in court filings. She’s twenty years old. She started using YouTube compulsively at age 6. Moved to Instagram at 9. Her case argues that the platforms made her depression worse and pushed her toward thoughts of suicide, not through the content users posted, but through deliberate design choices baked into the apps themselves.

Infinite scroll. Algorithmic feeds that surfaced more body comparison content the longer she stayed.

Big Tobacco lost on the same logic. For years, cigarette companies hid behind “personal choice.” Adults chose to smoke. Not our fault. That defense collapsed when courts focused on the product. The cigarette was engineered to be addictive, and the marketing was pointed at children. The choice was manufactured.

The algorithm wasn’t just serving content. It was engineered to maximize time-on-platform. The notification system was designed to keep pulling you back, the way a slot machine does. Earlier research had found that beauty filters harm teenage girls. Zuckerberg chose to reinstate them anyway, calling a ban overreaching.

On February 26, while testimony continued, Instagram announced a new feature: parents will receive alerts when their teenager repeatedly searches for suicide or self-harm content. After years of internal research showing the harm, after whistleblowers and a CEO on the stand, the company’s public response was a notification. A parental ping. The equivalent of a cigarette company printing “please smoke responsibly” on the carton.

What makes the announcement worse is that the feature only works if a parent is enrolled in Meta’s supervision tools. Fewer than one in ten teen accounts have that turned on. And that assumes the account the parent monitors is the only one. Most teenagers keep a second account, a private one their parents never see. Meta knows this. The company built a platform where a thirteen-year-old can create an anonymous account in two minutes. The parental alert doesn’t fix that. It gives Meta something to point to in court.

If the defective product theory holds up in this trial, the legal risk doesn’t stop at Meta. Every app optimized for attention faces the same potential liability. Infinite scroll runs nearly every feed, from Twitter to TikTok. Auto-play is YouTube’s signature. If this new legal argument wins, its ramifications reach all of them.

Careless People

The legal theory targets the product. But someone built it.

In “The Great Gatsby,” F. Scott Fitzgerald wrote a passage that has followed a certain kind of person ever since. Tom and Daisy Buchanan, he said, were careless people. “They smashed up things and creatures and then retreated back into their money or their vast carelessness, or whatever it was that kept them together, and let other people clean up the mess they had made.”

In January 2024, Zuckerberg sat before the Senate Judiciary Committee. He said the existing body of scientific research had not proved that social media causes mental health harms. His own researchers had been writing the opposite for years inside the company. On beauty filters, his position was that banning them would be overreaching, even with studies already in the public record showing those filters harm teenage girls.

Two years later, he was back under oath. This time in a Los Angeles courtroom, in front of a jury.

His legal team had coached him to appear “authentic, direct, human, insightful and real.” Zuckerberg acknowledged he’s not great at that. “I think I’m actually well known to be very bad at this,” he told the court.

Then his own people proved the point. Members of his entourage wore Meta AI glasses into a courtroom where recording is prohibited. Judge Kuhl halted the proceedings and questioned them about whether they had been recording. “If you have done that, you must delete that, or you will be held in contempt of the court.”

The careless people don’t see the mess. They see engagement metrics and time-on-platform numbers going up.

Plaintiff’s lawyer Mark Lanier unspooled a banner across the room. Somewhere between 35 and 50 feet long. Every Instagram post Kaley Cooper had ever made. Her whole account. Her whole record of being a teenager online.

Zuckerberg sat there and looked at it.

A 50-foot banner of a girl’s posts doesn’t leave much room for retreat.

Now the question is what a jury does with it.

The Verdict That Isn’t About Meta

As of this writing, the first test case is still in session. No verdict yet. But the stakes don’t end with Meta.

Two more test cases are scheduled: March 9 and May 11. A federal trial brought by school districts is set for June. Over 1,600 plaintiffs are waiting to see what this jury does.

TikTok and Snap settled before anyone got to a verdict.

If this jury finds Meta liable, every one of those pending cases gets stronger. Bloomberg reported potential settlements “that could total billions, a scenario similar to the deals that tarnished the tobacco and opioid industries.”

Insurance Journal called it social media’s “Big Tobacco moment.”

If product-design claims survive this trial and the inevitable appeals, every app that optimizes for attention is exposed. Every company tracking “daily active users” and “time on platform” as its core success metrics is now tracking liability.

The tobacco industry argued for decades that nobody forced anyone to light up.

Courts eventually stopped buying it.

A jury in Los Angeles is now deciding whether social media companies will meet the same fate.